Artificial Intelligence (AI) is a rapidly evolving field of computer science that focuses on creating machines capable of performing tasks that normally require human intelligence. This includes learning from data, recognizing patterns, making decisions, and understanding language. Today, AI powers many everyday technologies, from voice assistants and recommendation systems to autonomous vehicles and advanced healthcare tools.

The concept of AI has developed significantly since the early ideas proposed by computer scientist Alan Turing, who explored whether machines could think like humans. Modern AI systems rely on technologies such as Machine Learning, Deep Learning, and Natural Language Processing to analyze data and automate complex tasks.

In this guide, we provide a complete overview of artificial intelligence, including its definition, history, types, real-world applications, benefits, challenges, and future potential.

What is Artificial Intelligence?

Artificial Intelligence (AI) refers to the ability of machines or computer systems to perform tasks that normally require human intelligence. These tasks include learning from data, recognizing patterns, solving problems, understanding language, and making decisions. In simple terms, AI enables computers to analyze information, draw conclusions from it, and improve their performance over time.

The goal of AI is to create systems that can simulate human cognitive abilities and assist people in complex tasks. Modern AI technologies are used in many everyday applications, from search engines and recommendation systems to voice assistants and self-driving cars.

AI systems rely on advanced techniques such as Machine Learning, Deep Learning, and Natural Language Processing to analyze large amounts of data and identify patterns. These technologies allow machines to continuously improve their performance without being explicitly programmed for every situation.

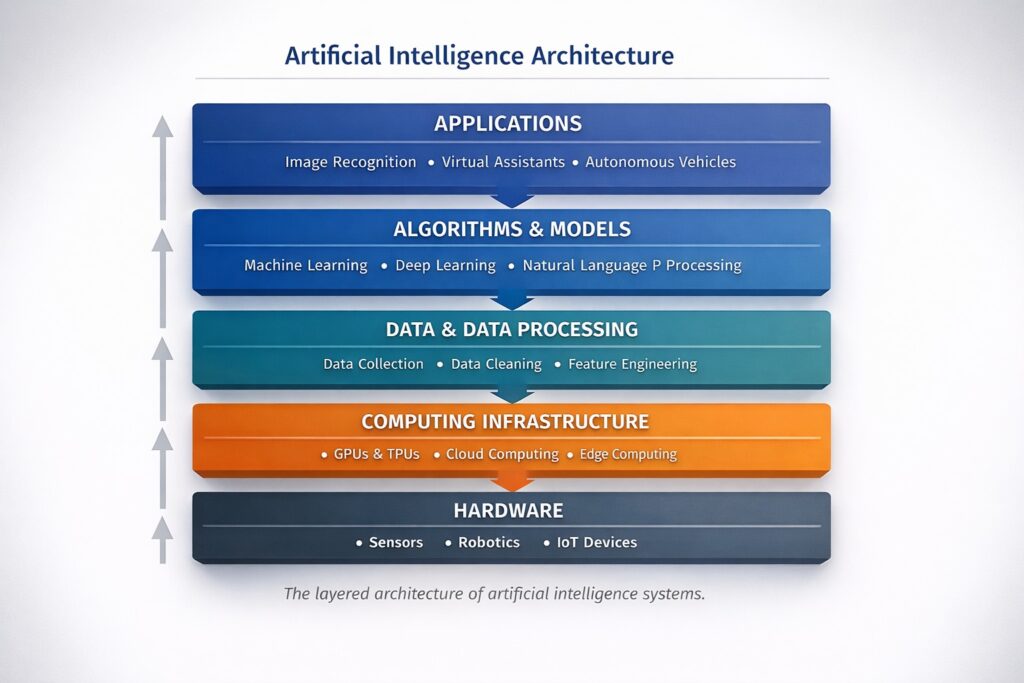

Caption: Diagram explaining the core structure and technologies of artificial intelligence.

Purpose of Artificial Intelligence

The main purpose of artificial intelligence is to make machines capable of performing tasks more efficiently and accurately. AI helps automate repetitive work, analyze large datasets, and support decision-making processes in different industries such as healthcare, finance, transportation, and marketing.

For example, companies like Google and Microsoft use AI systems to improve search results, automate customer support, and deliver personalized recommendations.

How Artificial Intelligence Mimics Human Intelligence

Artificial intelligence mimics human intelligence by reproducing some of its functions in software, not by copying the brain itself. AI systems learn from data, analyze patterns, and make predictions based on the examples they have already seen.

Some key abilities that allow AI to imitate human thinking include:

- Learning: AI systems learn from data using algorithms and models that improve accuracy over time.

- Reasoning: AI analyzes information and applies logical rules to reach conclusions.

- Problem Solving: AI can identify patterns in complex data and generate solutions to difficult problems.

- Decision Making: AI evaluates multiple possibilities and selects the most effective outcome based on available data.

These capabilities allow AI systems to perform tasks that previously required human intelligence, making artificial intelligence one of the most important technologies shaping the future of digital innovation.

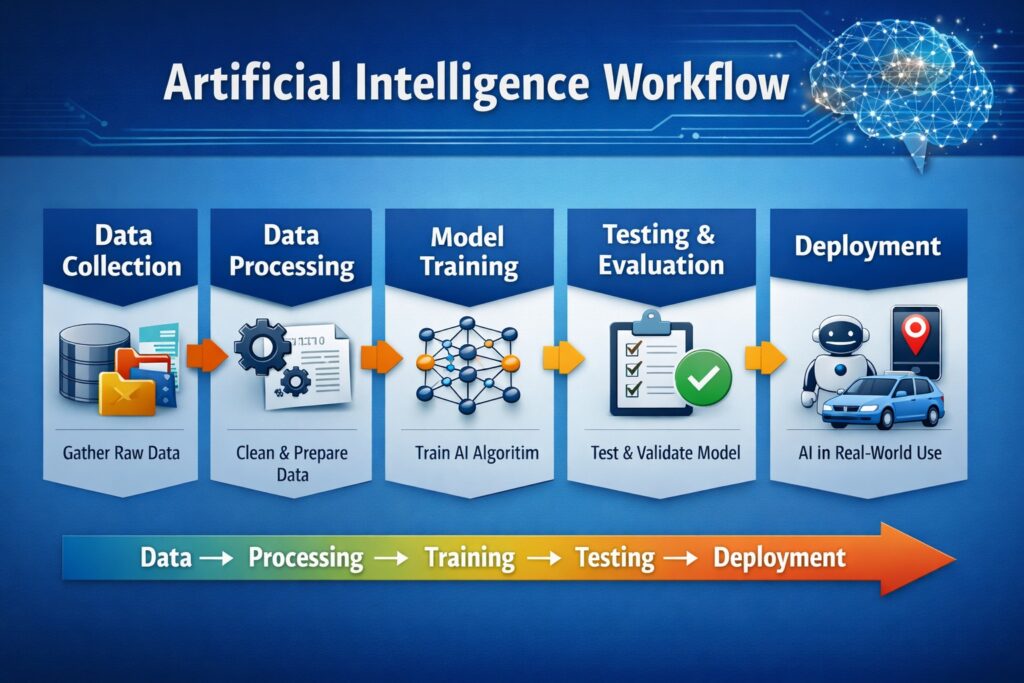

How Artificial Intelligence Works (Step-by-Step)

Artificial Intelligence works by combining large amounts of data with algorithms that allow computers to learn patterns, make predictions, and improve performance over time. Modern AI systems rely heavily on Machine Learning, Deep Learning, and neural network models to process information and automate decision-making.

Below is a simplified step-by-step explanation of how AI systems typically operate.

1. Data Collection

The first step in any AI system is collecting large amounts of data. This data can include images, text, videos, numbers, or user interactions. The quality and quantity of data play a major role in determining how accurate an AI model will be.

For example, voice assistants collect speech data to improve language recognition systems.

2. Data Processing

Once the data is collected, it must be cleaned and organized. This process removes errors, duplicates, or irrelevant information so the AI model can learn from reliable data.

Data preprocessing may include:

- Removing noise from datasets

- Converting text or images into machine-readable formats

- Structuring data for training algorithms
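
The preprocessing steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the function names (`clean_records`, `build_vocabulary`, `to_count_vector`) and the sample voice commands are assumptions made up for this example, and real systems typically use dedicated data libraries instead.

```python
import re
from collections import Counter


def clean_records(records):
    """Drop empty entries and duplicates, keeping first-seen order."""
    seen = set()
    cleaned = []
    for text in records:
        # Lowercase and collapse repeated whitespace so near-identical rows match.
        normalized = " ".join(text.lower().split())
        if not normalized or normalized in seen:
            continue
        seen.add(normalized)
        cleaned.append(normalized)
    return cleaned


def tokenize(text):
    """Split text into lowercase word tokens, discarding punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def build_vocabulary(documents):
    """Map each distinct token to an integer index, in alphabetical order."""
    tokens = sorted({tok for doc in documents for tok in tokenize(doc)})
    return {tok: idx for idx, tok in enumerate(tokens)}


def to_count_vector(text, vocabulary):
    """Convert one document into a fixed-length list of token counts."""
    counts = Counter(tokenize(text))
    return [counts.get(tok, 0) for tok in sorted(vocabulary, key=vocabulary.get)]


# Hypothetical raw input: one duplicate (after normalization) and one empty row.
raw = ["Play some  music", "play some music", "", "Turn off the lights!"]
docs = clean_records(raw)      # ['play some music', 'turn off the lights!']
vocab = build_vocabulary(docs)
vectors = [to_count_vector(d, vocab) for d in docs]
print(vectors[0])              # [0, 1, 0, 1, 1, 0, 0]
```

Each cleaned sentence ends up as a list of numbers of the same length, which is the kind of machine-readable structure a training algorithm can consume.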

3. Model Training

During the training phase, algorithms analyze the dataset and learn patterns within the information. AI models repeatedly process data and adjust their internal parameters to improve accuracy.

This is where techniques like Deep Learning and neural networks become essential for recognizing complex patterns.
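
To make "adjusting internal parameters" concrete, here is a small sketch that trains the simplest possible model, a straight line with two parameters, using gradient descent. It is a deliberately simplified stand-in for the deep learning models mentioned above, which apply the same idea to far more parameters. The function names, learning rate, and toy data are assumptions chosen for illustration.

```python
def train_linear_model(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y ≈ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error for every training example under the current parameters.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Move each parameter a small step in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def mean_squared_error(xs, ys, w, b):
    """Average squared gap between predictions and targets."""
    return sum(((w * x + b) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Toy dataset generated from the rule y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = train_linear_model(xs, ys)
print(round(w, 3), round(b, 3))  # close to 2.0 and 1.0
```

The model starts with both parameters at zero and recovers the underlying rule only by repeatedly measuring its own error, which is the core loop behind most model training.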

4. Testing and Evaluation

After training, the AI model is tested using new data to evaluate how well it performs. Developers measure accuracy, precision, and error rates to determine whether the system is ready for real-world use.

If the performance is not satisfactory, the model may be retrained with improved datasets.
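
The metrics mentioned above are simple to compute for a yes/no classifier. The sketch below assumes binary labels where 1 means "positive"; the function name `evaluate_binary` and the sample labels are hypothetical, and libraries such as scikit-learn provide more complete versions of these measures.

```python
def evaluate_binary(y_true, y_pred):
    """Return accuracy, precision, and error rate for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and the same length")
    pairs = list(zip(y_true, y_pred))
    true_positives = sum(1 for t, p in pairs if t == 1 and p == 1)
    false_positives = sum(1 for t, p in pairs if t == 0 and p == 1)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    # Precision: of everything the model flagged as positive, how much was right.
    predicted_positive = true_positives + false_positives
    precision = true_positives / predicted_positive if predicted_positive else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "error_rate": 1.0 - accuracy,
    }


# Held-out labels the model never saw during training, and its predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

metrics = evaluate_binary(y_true, y_pred)
print(metrics)  # {'accuracy': 0.75, 'precision': 0.75, 'error_rate': 0.25}
```

The important design point is that `y_true` comes from data held back from training; measuring on the training data itself would overstate how well the model generalizes.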

5. Deployment and Real-World Use

Once the model performs well, it is deployed in real applications such as:

- Virtual assistants

- Recommendation systems

- Fraud detection systems

- Autonomous vehicles

Companies like Google and Microsoft use AI systems to power search engines, cloud platforms, and intelligent automation tools.

History of Artificial Intelligence

The history of Artificial Intelligence (AI) dates back to the early ideas of creating machines that could simulate human intelligence. Over the decades, advances in computing power, data availability, and algorithms have transformed AI from a theoretical concept into one of the most influential technologies in the modern world.

The foundations of artificial intelligence were influenced by the work of British mathematician and computer scientist Alan Turing, who proposed the idea that machines could potentially think like humans. His famous Turing Test became an early benchmark for evaluating whether a machine could demonstrate intelligent behavior.

Today, AI technologies such as Machine Learning, Deep Learning, and Natural Language Processing are used in countless applications, from healthcare and finance to autonomous vehicles and intelligent assistants.

Timeline of Artificial Intelligence Development

Understanding the major milestones in AI development helps explain how this technology has evolved over time.

1950 – The Beginning of AI Concepts

Computer scientist Alan Turing published a groundbreaking paper titled “Computing Machinery and Intelligence.” In this work, he introduced the “imitation game,” now known as the Turing Test, as a way to judge whether a machine can exhibit intelligent behavior similar to that of humans.

1956 – Birth of Artificial Intelligence as a Field

The term “Artificial Intelligence” was coined by John McCarthy in the proposal for the 1956 Dartmouth workshop, often called the Dartmouth Conference, which is widely considered the starting point of AI as an academic discipline.

1997 – AI Defeats a World Chess Champion

A major breakthrough occurred when Deep Blue, a supercomputer developed by IBM, defeated world chess champion Garry Kasparov in a six-game match. This event demonstrated the potential of AI in complex strategic decision-making.

2011 – AI Wins a Quiz Competition

IBM's Watson, a question-answering system, won the popular quiz show Jeopardy! against former champions Ken Jennings and Brad Rutter. This highlighted the growing power of AI in natural language understanding.

2016 – AI Beats a Go Champion

AlphaGo, an AI system developed by Google DeepMind, defeated world champion Lee Sedol four games to one in the complex board game Go. This milestone demonstrated the remarkable progress of AI in strategic learning.

2020s – Rapid Growth of AI Technologies

Modern AI systems developed by organizations such as OpenAI and Microsoft are capable of generating text, images, and code, powering advanced applications like chatbots, automation tools, and intelligent assistants.

Why the History of AI Matters

Studying the history of artificial intelligence helps us understand how technological breakthroughs and research innovations have shaped modern AI systems. Each milestone has contributed to the development of smarter algorithms, more powerful computing systems, and practical applications that impact everyday life.

As AI continues to evolve, it is expected to play an even greater role in industries such as healthcare, education, finance, and transportation.

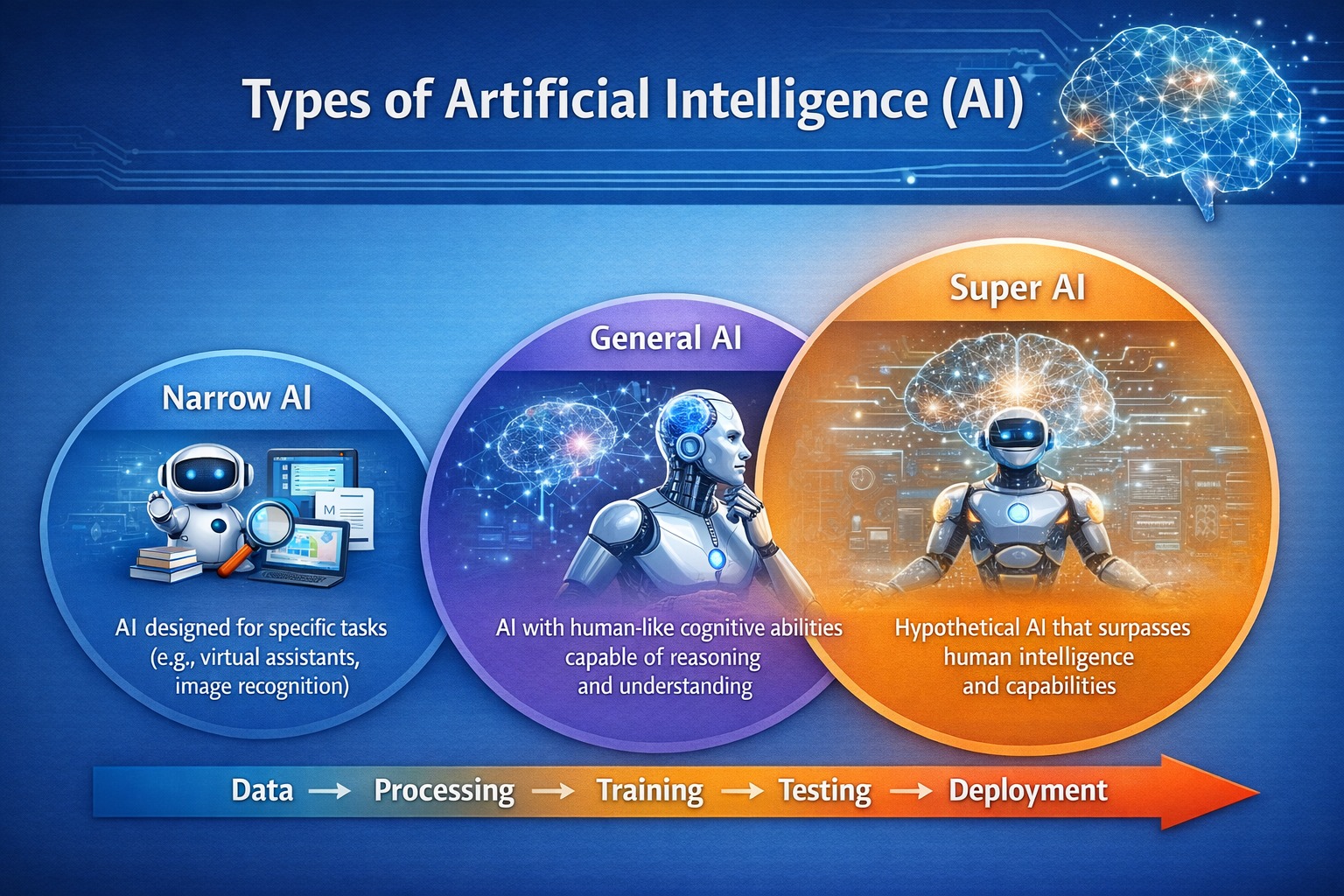

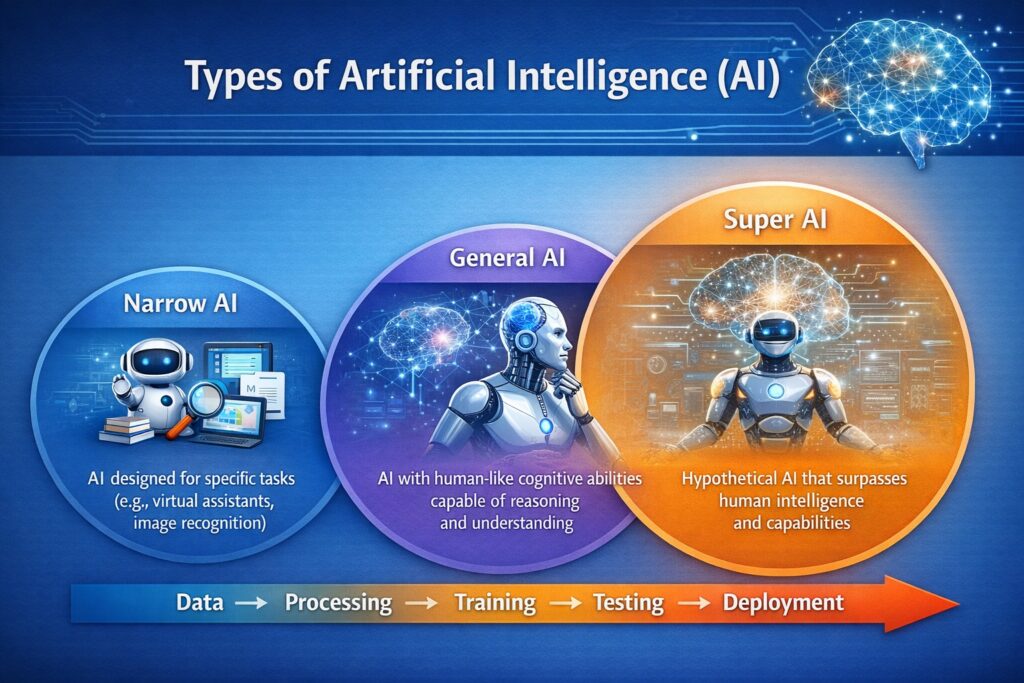

Types of Artificial Intelligence

Artificial Intelligence can be classified into different types based on its capabilities and functional characteristics. Understanding these types helps explain how AI systems are designed and how they are used in real-world applications.

Modern AI technologies such as Machine Learning, Deep Learning, and Natural Language Processing power many of these systems and enable machines to perform intelligent tasks.

AI is generally divided into three major types based on capability.

Types of Artificial Intelligence Based on Capability

| Type of AI | Description | Example Applications |
| --- | --- | --- |
| Narrow AI | AI designed to perform a specific task efficiently | Voice assistants, recommendation systems |
| General AI | AI that can understand and perform any intellectual task similar to humans | Still under research |
| Super AI | Hypothetical AI that surpasses human intelligence in all aspects | Future concept |

1. Narrow Artificial Intelligence (Weak AI)

Narrow AI, also known as Weak AI, is the most common type of artificial intelligence used today. These systems are designed to perform specific tasks and operate within a limited range of functions.

Examples include:

- Virtual assistants like Google Assistant

- Recommendation algorithms used by Amazon

- Image recognition systems used in social media platforms

Although Narrow AI can perform its assigned task extremely well, it cannot operate outside the tasks it was designed or trained for.

2. Artificial General Intelligence (AGI)

Artificial General Intelligence refers to AI systems that can understand, learn, and perform any intellectual task that a human can do. Unlike Narrow AI, AGI would be capable of reasoning, planning, problem-solving, and adapting to new situations.

Researchers and technology organizations such as OpenAI and Microsoft are actively exploring advancements that could lead to the development of AGI.

However, true AGI has not yet been achieved and remains an important goal in AI research.

3. Artificial Super Intelligence (ASI)

Artificial Super Intelligence is a theoretical form of AI that would surpass human intelligence in every field, including creativity, problem-solving, and decision-making.

If developed, ASI could potentially outperform humans in areas such as scientific discovery, technological innovation, and complex strategic planning.

Although ASI remains hypothetical, some researchers consider it a possible long-term outcome if progress in artificial intelligence continues.

Types of Artificial Intelligence Based on Functionality

AI can also be categorized based on how systems operate and interact with the environment.

Reactive Machines

Reactive machines are the most basic AI systems. They respond to specific inputs but do not store memories or learn from past experiences.

Limited Memory AI

These AI systems can learn from historical data and improve their decision-making over time. Most modern AI applications, including self-driving cars, use limited memory AI.
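
The difference between the two categories above can be illustrated with a toy braking controller. This is only a sketch under simplifying assumptions: the threshold, window size, and distance readings are invented, and a real limited memory system learns from large historical datasets and does not use hand-written rules like these.

```python
from collections import deque


def reactive_brake(distance_m, threshold_m=10.0):
    """Reactive: the decision depends only on the current input."""
    return distance_m < threshold_m


class LimitedMemoryBrake:
    """Limited memory: the decision also uses a short window of recent readings."""

    def __init__(self, threshold_m=10.0, window=3):
        self.threshold_m = threshold_m
        self.recent = deque(maxlen=window)

    def decide(self, distance_m):
        self.recent.append(distance_m)
        if distance_m < self.threshold_m:
            return True
        readings = list(self.recent)
        if len(readings) == self.recent.maxlen:
            # Brake early when the gap shrank on every recent reading and would
            # drop below the threshold if it keeps closing at the latest rate.
            closing = all(a > b for a, b in zip(readings, readings[1:]))
            step = readings[-2] - readings[-1]
            return closing and (distance_m - step) < self.threshold_m
        return False


# Hypothetical distance readings (in meters) to the vehicle ahead.
readings = [30.0, 22.0, 14.0, 9.0]

reactive = [reactive_brake(d) for d in readings]
controller = LimitedMemoryBrake()
with_memory = [controller.decide(d) for d in readings]

print(reactive)     # [False, False, False, True]
print(with_memory)  # [False, False, True, True]
```

The reactive function waits until the gap is already too small, while the version with memory notices the trend one reading earlier.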

Theory of Mind AI

This concept refers to AI systems that could understand human emotions, beliefs, and intentions. No such system exists today; it remains a research goal.

Self-Aware AI

Self-aware AI represents the most advanced stage of artificial intelligence where machines would possess consciousness and self-awareness. This concept remains purely theoretical.

Applications of Artificial Intelligence in Real Life

Artificial Intelligence has rapidly transformed many industries by enabling machines to analyze data, automate tasks, and make intelligent decisions. Today, AI technologies such as Machine Learning, Deep Learning, and Natural Language Processing power countless real-world applications that improve efficiency, productivity, and user experience.

From healthcare and finance to transportation and education, artificial intelligence is now integrated into many aspects of everyday life.

1. Artificial Intelligence in Healthcare

AI is transforming healthcare by helping doctors diagnose diseases more accurately and efficiently. AI-powered systems can analyze medical images, detect patterns in patient data, and assist in treatment planning.

For example, research organizations such as Google DeepMind have developed AI models capable of identifying medical conditions from imaging data with high accuracy.

AI applications in healthcare include:

- Disease diagnosis

- Drug discovery

- Medical imaging analysis

- Personalized treatment recommendations

2. Artificial Intelligence in Finance

Financial institutions use AI to analyze large amounts of financial data, detect fraudulent transactions, and improve investment strategies. AI algorithms can identify unusual patterns in transactions and help banks prevent financial fraud.

Companies like PayPal and Mastercard use AI technologies to strengthen fraud detection systems and enhance transaction security.
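
A very simple way to spot an "unusual pattern" is to compare each new transaction with a customer's typical spending. The sketch below uses a basic z-score rule as a stand-in; it is not how any named company's system works, and the function name, threshold, and amounts are assumptions for illustration. Production fraud models combine many more signals.

```python
from statistics import mean, stdev


def flag_unusual(history, new_amounts, z_threshold=3.0):
    """Flag amounts more than z_threshold standard deviations from the historical mean."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past amount was identical, so any different amount is unusual.
        return [amount != mu for amount in new_amounts]
    return [abs(amount - mu) / sigma > z_threshold for amount in new_amounts]


# Hypothetical past purchase amounts for one customer.
history = [20.0, 25.0, 22.0, 30.0, 27.0, 24.0, 26.0, 23.0]

flags = flag_unusual(history, [28.0, 950.0])
print(flags)  # [False, True]
```

A purchase of 28 is within this customer's normal range, while 950 is far outside it and would be sent for review.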

3. Artificial Intelligence in Transportation

AI plays a major role in modern transportation systems, especially in the development of autonomous vehicles and intelligent traffic management systems.

Self-driving technologies developed by companies such as Tesla rely on AI algorithms to analyze sensor data, recognize objects, and make real-time driving decisions.

AI applications in transportation include:

- Self-driving cars

- Traffic prediction systems

- Smart navigation and route optimization

4. Artificial Intelligence in E-Commerce

Online businesses use AI to understand customer behavior and provide personalized shopping experiences. AI-powered recommendation systems analyze browsing history, purchase patterns, and user preferences.

For example, e-commerce platforms like Amazon use AI algorithms to recommend products and improve customer engagement.
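
The idea behind purchase-based recommendations can be sketched with a small "customers who bought this also bought" function. This is a minimal illustration under assumed data, not the algorithm any particular retailer uses; the user names, items, and the `recommend` function are hypothetical.

```python
from collections import Counter


def recommend(purchases, user, top_n=2):
    """Suggest items bought by users who share at least one item with `user`."""
    owned = purchases[user]
    scores = Counter()
    for other, items in purchases.items():
        if other == user:
            continue
        overlap = len(owned & items)
        if overlap == 0:
            continue
        # Weight each unseen item by how much the two purchase histories overlap.
        for item in items - owned:
            scores[item] += overlap
    # Sort by score (highest first), then by name so ties are deterministic.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


# Hypothetical purchase histories.
purchases = {
    "ana": {"laptop", "mouse"},
    "ben": {"laptop", "mouse", "keyboard"},
    "cy": {"laptop", "monitor"},
    "dee": {"novel"},
}

suggestions = recommend(purchases, "ana")
print(suggestions)  # ['keyboard', 'monitor']
```

The keyboard ranks first because it comes from the shopper whose history overlaps most with the target user's, which is the basic intuition behind collaborative filtering.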

5. Artificial Intelligence in Education

AI is also transforming the education sector by enabling personalized learning experiences and intelligent tutoring systems. AI-powered platforms can analyze student performance and recommend customized learning materials.

Educational tools such as Duolingo use AI to adapt lessons according to a learner’s progress and learning style.

6. Artificial Intelligence in Customer Service

Many companies use AI chatbots and virtual assistants to automate customer support and respond to user inquiries quickly.

AI systems like ChatGPT can understand natural language queries and generate helpful responses, improving customer service efficiency.

Why AI Applications Are Growing Rapidly

The widespread adoption of artificial intelligence is driven by several factors:

- Increasing availability of data

- Improvements in computing power

- Advancements in AI algorithms

- Growing demand for automation

As these technologies continue to evolve, artificial intelligence is expected to play an even greater role in shaping industries and solving complex global challenges.

Advantages and Disadvantages of Artificial Intelligence

Artificial Intelligence has become one of the most influential technologies in the modern world. By combining advanced algorithms, data processing, and technologies like Machine Learning, Deep Learning, and Natural Language Processing, AI systems can automate tasks, analyze complex data, and assist humans in decision-making.

However, while AI offers many benefits, it also presents several challenges and concerns that must be carefully addressed.

Advantages of Artificial Intelligence

Artificial intelligence provides numerous benefits across industries and everyday life. Some of the most important advantages include:

1. Automation of Repetitive Tasks

AI can automate routine and repetitive tasks, allowing businesses to increase efficiency and reduce human workload. This helps employees focus on more creative and strategic activities.

2. Improved Accuracy and Reduced Errors

AI systems can process large amounts of data with high precision, minimizing the risk of human errors in tasks such as data analysis, diagnostics, and financial calculations.

3. Faster Decision-Making

AI algorithms analyze information quickly and generate insights that help organizations make faster and more informed decisions.

4. 24/7 Availability

Unlike humans, AI-powered systems can operate continuously without fatigue. Virtual assistants and chatbots such as ChatGPT can provide customer support at any time of the day.

5. Innovation in Multiple Industries

Artificial intelligence is driving innovation in sectors such as healthcare, finance, transportation, and education. Companies like Google and Microsoft are continuously developing AI technologies that improve products and services.

Disadvantages of Artificial Intelligence

Despite its benefits, artificial intelligence also presents certain risks and limitations.

1. Job Displacement

Automation powered by AI may replace certain types of jobs, particularly those involving repetitive or routine tasks. This raises concerns about workforce adaptation and employment opportunities.

2. High Development Costs

Developing advanced AI systems requires significant investments in computing infrastructure, research, and specialized expertise.

3. Ethical and Privacy Concerns

AI systems often rely on large datasets, which can raise concerns about data privacy, surveillance, and ethical use of technology.

4. Dependence on Technology

Heavy reliance on AI systems may reduce human skills and increase vulnerability if systems fail or malfunction.

5. Limited Creativity and Emotional Understanding

While AI can analyze data and recognize patterns, it still lacks true human creativity, emotional intelligence, and moral judgment.

Comparison: Advantages vs Disadvantages of Artificial Intelligence

| Advantages | Disadvantages |
| --- | --- |
| Automates repetitive tasks | May cause job displacement |
| High accuracy and efficiency | Expensive to develop and maintain |
| Faster data analysis and decision-making | Ethical and privacy concerns |
| Works continuously without fatigue | Heavy dependence on technology |
| Drives innovation across industries | Limited emotional and creative intelligence |

Why Understanding AI Benefits and Risks Is Important

Understanding both the advantages and disadvantages of artificial intelligence helps organizations and policymakers develop responsible strategies for adopting AI technologies. By balancing innovation with ethical considerations, AI can be used to create solutions that benefit society while minimizing potential risks.

Future of Artificial Intelligence (2025–2035 Predictions)

Artificial Intelligence is expected to play an increasingly important role in shaping the future of technology, business, and society. Rapid advancements in computing power, data availability, and research in fields like Machine Learning, Deep Learning, and Natural Language Processing are accelerating the development of smarter and more capable AI systems.

Experts believe that the next decade will bring significant breakthroughs in artificial intelligence, enabling machines to perform complex tasks, assist in scientific discoveries, and transform industries around the world.

1. AI-Powered Automation Across Industries

In the coming years, artificial intelligence will automate many repetitive and data-driven tasks across sectors such as manufacturing, healthcare, finance, and logistics.

Organizations like Microsoft and Google are already integrating AI into cloud platforms and enterprise software to improve productivity and decision-making.

Future AI systems may help businesses:

- automate operational processes

- analyze massive datasets instantly

- optimize supply chains

- improve customer experiences

2. Advances Toward Artificial General Intelligence

Researchers are actively exploring the development of Artificial General Intelligence (AGI), a type of AI capable of performing intellectual tasks at a human level.

Companies such as OpenAI are investing heavily in research to build more advanced AI systems capable of reasoning, learning, and adapting to new challenges.

Although AGI has not yet been achieved, some researchers expect it within the coming decades, while others consider the timeline highly uncertain.

3. AI in Healthcare and Scientific Discovery

Artificial intelligence is expected to significantly accelerate medical research and healthcare innovation.

AI systems will likely assist doctors in diagnosing diseases earlier, discovering new drugs faster, and personalizing treatments based on patient data.

Research organizations like Google DeepMind are already using AI to study complex biological problems and develop solutions that could improve global health outcomes.

4. Smarter Robotics and Autonomous Systems

Future AI technologies will power advanced robotics capable of performing complex physical tasks. Autonomous vehicles, drones, and industrial robots will become more intelligent and efficient.

Companies such as Tesla are developing autonomous driving technologies that rely heavily on AI algorithms and sensor data to navigate real-world environments.

5. Ethical AI and Responsible Development

As artificial intelligence becomes more powerful, governments and organizations will increasingly focus on ethical AI development. Issues such as data privacy, algorithmic bias, and responsible AI governance will become central to global technology policies.

Technology leaders and research institutions are working to create frameworks that ensure AI systems are transparent, fair, and aligned with human values.

6. AI Transforming Everyday Life

In the future, AI will become even more integrated into daily life. Intelligent assistants, personalized education platforms, smart homes, and advanced digital services will rely on AI to provide highly customized experiences.

Applications powered by AI systems like ChatGPT demonstrate how machines can interact with humans using natural language and assist with tasks ranging from writing to problem-solving.

What the Future of AI Means for Society

The continued evolution of artificial intelligence will bring both opportunities and challenges. While AI has the potential to increase productivity, accelerate innovation, and improve quality of life, it will also require careful regulation and responsible development.

By combining technological progress with ethical considerations, artificial intelligence can help address some of the world’s most complex problems and shape a smarter, more connected future.

Artificial Intelligence Statistics (2025–2026)

Artificial Intelligence continues to grow rapidly, becoming one of the most important technologies shaping the global economy. Breakthroughs in fields such as Machine Learning, Deep Learning, and Natural Language Processing are accelerating the adoption of AI across industries worldwide.

Below are some recent statistics and trends for 2025–2026 that highlight the expanding influence of artificial intelligence.

Key AI Statistics (2025–2026)

- The global artificial intelligence market is projected to reach over $300 billion by 2026, with continued rapid growth expected in the following decade.

- More than 75% of businesses worldwide are expected to integrate AI technologies into their operations by 2026.

- AI-driven automation could contribute over $15 trillion to the global economy by 2030, making it one of the most impactful technologies of the century.

- Around 80% of enterprises are currently experimenting with or actively deploying AI-powered solutions.

- Major technology companies such as OpenAI, Microsoft, and Google are investing billions of dollars in AI infrastructure, research, and development.

- AI-powered tools like ChatGPT are transforming industries including education, marketing, software development, and customer service.

Why These 2025–2026 Statistics Matter

These recent statistics demonstrate how artificial intelligence is rapidly evolving from a research field into a critical business and technology infrastructure. Organizations around the world are adopting AI to increase efficiency, automate operations, and gain competitive advantages in data-driven decision-making.

As advancements continue, artificial intelligence is expected to play an even greater role in innovation, productivity, and digital transformation over the next decade.